Enhancing User Experience by Overcoming Latency in the ION IQ Chatbot

In our previous deep-dive into the ION IQ Chatbot, we explained the technical details of building our AI chatbot powered by Large Language Models (LLMs). The bot is designed with a focus on ensuring consistent and localized responses using the Augmented Generation (AG) and Retrieval Augmented Generation (RAG) patterns.

While both patterns ensure accuracy through multiple LLM calls, they can lead to increasing latency.

Any application relying on LLMs, like the ION Chatbot, faces a common issue: latency due to LLM calls. This latency, often caused by Agents and Chains implemented with multiple LLM calls, can negatively affect user experience. In this blog post, we share our experience on how we tackle this issue and propose practical solutions that we have found effective.

Our Solutions to Address Latency

Optimizing LLM Calls

Because we are calling an LLM model hosted by Azure OpenAI and not serving our own model, there is nothing we can do about the model itself. Instead, we optimize the calls by:

- Prioritizing the use of smaller LLM calls: Because LLM latency is linear in the output token count1, we will get faster response if the output text is short. Therefore, we can improve the performance if we ask the LLM to only output what we need. We limit the output size by putting instructions in the prompt. We also refine the input prompt such that the LLM only generates necessary information.

- Using Azure OpenAI: We are using GPT-3.5-turbo deployed in Azure OpenAI. Researchers have found that the Azure OpenAI version is faster than the OpenAI version, with up to 20% improvement2

Backend Refinements

To further reduce latency, we also work on other backend improvements:

- Lazy Loading3of Components: Not every user interaction requires the full suite of our components. For instance, simple greetings should not load components used for Microsoft pricing information or Microsoft Sentinel usage data. Through implementing lazy loading, we only engage the necessary components for each interaction.

- Optimized Data Storage: To enhance our storage efficiency for conversation histories, we have transitioned to using AppendBlobs from the more typical BlockBlobs. This approach prevents the need to repeatedly retrieve, modify and overwrite stored conversation data for each interaction.

While most of the latency stems from the LLM calls, these technical solutions, though modest, still play a critical role. Every saved second counts towards user satisfaction.

User Experience Enhancements

In addition to reducing the latency, we have also turned our attention to user experience. These enhancements will not directly reduce the latency, but they can help improve the user experience:

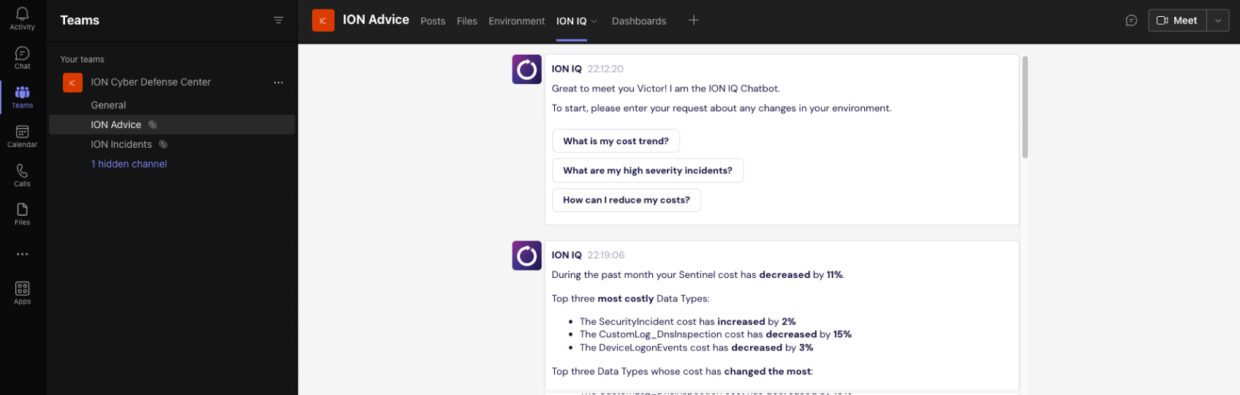

- Backend Activity Indicators: To keep users informed, we have integrated preset routines that display anticipated backend activities, such as “Analyzing your request …,” “Collecting the data …” or “Generating the response …” We are currently working on an even better solution where we show the real activities happening in the backend. These could include which tool was chosen or the KQL query that was executed.

- Cancel and Retry Buttons: This is useful when the chatbot might experience unexpected delays. Users have the option to cancel or retry their request.

- Follow-Up Question Suggestions: To encourage deeper and more efficient engagement and exploration on topics, we have added buttons that suggest follow-up questions.

- Streaming mode: Streaming is showing the LLM-generated response one word at a time. With it, users can start reading the generated content long before the whole response is generated. We plan to enable this mode in the future.

Enabling Customers through Useful Interactions

The ION IQ Chatbot has been carefully designed to ensure consistent and localized response. Recognizing the challenge of latency that comes with the use of LLMs, we have made dedicated efforts to reduce the delay and improve the customer experience.

We have focused on optimizing LLM calls and implementing backend improvements such as lazy loading and optimized data storage. Furthermore, we have tackled the problem from a different angle by introducing user experience enhancements, like backend activity indicators and follow-up question suggestions. They help the users stay engaged and interact with the chatbot more efficiently.

At the end of the day, our commitment is to provide a smooth experience for our customers, ensuring that every interaction with the ION IQ Chatbot is efficient and useful.